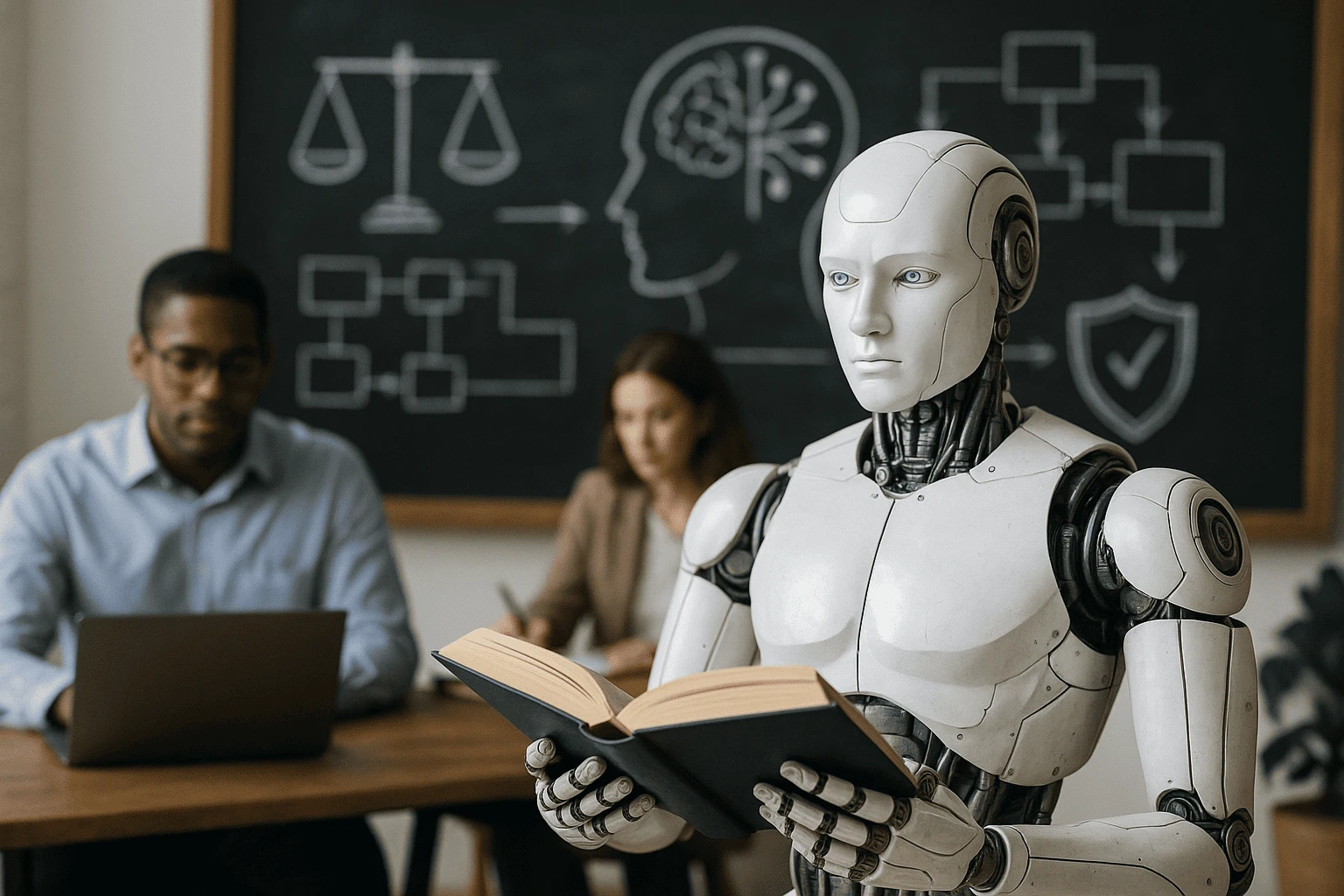

In today’s rapidly evolving world of artificial intelligence, the question is no longer whether we can build powerful AI systems — it’s whether we should. As AI becomes deeply woven into healthcare, finance, law enforcement, education, and even governance, the risks of unregulated, biased, or harmful technology are too big to ignore. That’s where AI ethics frameworks come in.

These frameworks are not just academic theories or lofty ideals. They are structured sets of principles, rules, and best practices designed to make AI more fair, transparent, accountable, and aligned with human values. And in 2025, with governments and global organisations pushing for stronger AI governance, understanding these ethical foundations has become more than a nice-to-have — it’s a must.

Whether you’re a developer writing machine learning models, a business leader implementing AI into workflows, or simply a curious mind trying to make sense of our AI-driven future, grasping the essentials of ethical AI is vital. Global bodies like UNESCO, OECD, and standards groups like ISO/IEC are already shaping policy and technical standards. Big Tech is setting internal frameworks. Countries like Pakistan are building national AI policies to ensure local AI innovation stays responsible and human-centred.

This guide walks through the must-know AI ethics frameworks that are influencing how AI is built and governed globally. We’ll explain them in simple, accessible language, with no jargon and no fluff, and show how they matter to you, whether you code, manage, or care about AI’s role in society.

At their core, AI ethics frameworks are structured guidelines designed to ensure that artificial intelligence is developed and used responsibly. They combine philosophical principles, human rights standards, and practical best practices into clear directions that guide how AI systems should behave — and how humans should build them.

Think of these frameworks as a moral compass for AI. They help organisations and developers answer tough questions like:

Is this AI system fair to everyone, regardless of gender, race, or location?

Does it protect user privacy?

Can people understand how decisions are made?

Who is accountable if something goes wrong?

These aren’t just theoretical concerns. When an AI-powered hiring tool rejects candidates based on biased data, or a facial recognition system misidentifies people, real harm happens. AI ethics frameworks aim to prevent such issues by embedding ethical checks into every stage — from data collection and algorithm design to deployment and monitoring.

Most frameworks include pillars like:

Fairness – avoiding bias and discrimination

Transparency – making AI decisions understandable

Accountability – knowing who is responsible

Privacy & Security – protecting personal data

Human Oversight – keeping humans in control

The push for ethical AI isn’t just happening in tech companies or research labs — it’s a global movement. Major international organisations have launched their own AI ethics frameworks in response to rising concerns about automation, bias, surveillance, and inequality.

For example:

UNESCO adopted a global Recommendation on the Ethics of Artificial Intelligence, the first global standard on AI ethics, agreed by all 193 of its member states in November 2021. It covers everything from data governance to environmental impact, and urges countries to put ethical principles into national AI laws.

The European Union introduced the AI Act, focusing on regulating high-risk AI systems and requiring transparency, human oversight, and risk management.

ISO/IEC 42001, published in 2023, is an international standard for AI management systems. It gives organisations a structured way to align their AI development with ethical goals and to support responsible design and deployment.

These efforts signal a clear message: AI must be built responsibly, or it risks causing serious harm.

For countries like Pakistan, these frameworks provide not just guidance but a benchmark. As local startups, businesses, and public institutions begin to use AI, aligning with global ethics standards ensures the technology uplifts rather than divides.

Understanding the AI ethics landscape means knowing who’s setting the standards — and what those standards are. Here are the most respected and widely adopted global AI ethics frameworks that are influencing both policy and practice.

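In 2021, UNESCO made history by adopting the world’s first global agreement on AI ethics, the Recommendation on the Ethics of Artificial Intelligence, which was adopted by all 193 member states. As a recommendation it is not a signed treaty, but it isn’t just symbolic either: it’s a roadmap for national AI strategies and regulations.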

Key highlights include:

Human Rights Focus: AI must respect human dignity, freedom, and diversity.

Data Governance: Encourages transparency, data privacy, and secure data handling.

Sustainability: Highlights AI’s environmental impact and promotes green technologies.

Accountability Mechanisms: Urges countries to ensure legal and institutional safeguards.

Why it matters:

UNESCO’s framework is global and inclusive, built through a multi-stakeholder process. For countries like Pakistan, it offers a baseline to develop ethical AI policies that align with international best practices.

The OECD Principles on AI, adopted by 46 countries (including the US and most of Europe), emphasize five core values:

Inclusive growth, sustainable development and well-being

Human-centred values and fairness

Transparency and explainability

Robustness, security and safety

Accountability

These principles are voluntary but widely referenced, especially by policymakers, and are now influencing hard regulations like the EU AI Act.

In parallel, the ISO/IEC 42001 standard — published in 2023 — gives companies a certifiable AI management system, similar to how ISO 9001 is used for quality management.

Why it matters:

This standard makes ethical AI measurable and implementable. It’s especially useful for tech firms, government bodies, and enterprises adopting AI across operations.

While governments and institutions create formal policies, tech giants — who actually build and deploy large-scale AI — have developed their own internal ethics guidelines.

Microsoft’s Responsible AI Principles include:

Fairness

Reliability & safety

Privacy & security

Inclusiveness

Transparency

Accountability

Similarly, Google’s AI Principles emphasise:

Avoiding bias and harm

Being socially beneficial

Ensuring accountability

Upholding high privacy standards

Why it matters:

These frameworks directly influence how AI tools — from chatbots to search algorithms to facial recognition — are built. As Microsoft and Google AI products are used globally, their internal ethics models often serve as industry benchmarks.

Understanding ethics frameworks is one thing — implementing them is another. Here’s how developers, business leaders, and even policymakers can bring these principles to life in day-to-day decisions and workflows.

If you’re building AI systems, you’re on the front lines of ethical decision-making. It’s not just about writing clean code — it’s about thinking through the social impact of what you’re creating.

Here’s how developers can apply AI ethics frameworks in practice:

Bias Testing in Data

Before training any model, assess your dataset for bias. Use fairness libraries like IBM’s AI Fairness 360 or Google’s What-If Tool. Ask: does the data represent diverse groups fairly?
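To make the bias question concrete, here is a minimal sketch in plain Python rather than a specific fairness library. It compares how often each group receives a positive outcome and computes the ratio between the lowest and highest rates. The applicant records, the field names, and the 0.8 review threshold (a common rule of thumb, not something the frameworks above mandate) are all assumptions for illustration.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical hiring data, invented purely for this example.
applicants = [
    {"gender": "F", "shortlisted": True},
    {"gender": "F", "shortlisted": False},
    {"gender": "F", "shortlisted": False},
    {"gender": "F", "shortlisted": False},
    {"gender": "M", "shortlisted": True},
    {"gender": "M", "shortlisted": True},
    {"gender": "M", "shortlisted": False},
    {"gender": "M", "shortlisted": False},
]

rates = selection_rates(applicants, "gender", "shortlisted")
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold; pick one that suits your context
print(rates, ratio, needs_review)
```

A low ratio does not prove discrimination on its own, but it is a signal to look harder at how the data was collected before training on it.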

Transparent Models

Where possible, choose interpretable models. If you must use black-box systems (like deep learning), provide explanation layers or visualizations to show how decisions are made.
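One way to picture an interpretable model is a simple weighted score where each feature’s contribution can be read off directly. The sketch below is illustrative only: the feature names, weights, and bias are hypothetical and not taken from any real system.

```python
def explain_score(weights, bias, features):
    """Score one case with a linear model and rank each feature's contribution."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Largest absolute contribution first, so the main drivers are easy to spot.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical weights for a candidate-screening score.
weights = {"years_experience": 0.5, "skills_matched": 1.0, "employment_gap_years": -0.25}
bias = -2.0
candidate = {"years_experience": 4, "skills_matched": 3, "employment_gap_years": 2}

score, ranked = explain_score(weights, bias, candidate)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
print(f"total score: {score:.2f}")
```

Because every contribution is visible, a reviewer can ask whether a feature such as an employment gap should influence the outcome at all, which is much harder to do with a black-box model.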

Privacy by Design

Integrate privacy-preserving techniques like differential privacy, federated learning, or anonymisation at the architecture level.
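As a rough illustration of differential privacy, the sketch below adds Laplace noise to a count so that any single person’s presence changes the published number only slightly. The function names, the sample ages, and the epsilon value are assumptions for this example; a production system should rely on a vetted privacy library rather than hand-rolled noise.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng=None):
    """Return a noisy count of the values that match the predicate.

    A count changes by at most 1 when one person is added or removed,
    so the noise scale is 1 / epsilon. Smaller epsilon means more noise.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for value in values if predicate(value))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical ages; the true number of people over 40 is 3.
ages = [23, 35, 41, 52, 67, 29]
noisy = private_count(ages, lambda age: age > 40, epsilon=1.0)
print(f"noisy count of people over 40: {noisy:.2f}")
```

Each run prints a slightly different number. That randomness is the point: the published figure stays useful in aggregate while revealing little about any one individual.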

Document Everything

Follow “model cards” or “datasheets for datasets”: standardised documentation practices that explain how your model was built and tested, and what its limitations are.
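Documentation is easier to keep up when it lives next to the code. Here is a small sketch of a model card as a Python data structure that can flag empty sections before release. The field names are a simplified assumption, not the official model card template, and the example model and its numbers are made up.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """A pared-down model card; the field names are illustrative assumptions."""
    name: str
    intended_use: str = ""
    training_data: str = ""
    evaluation: dict = field(default_factory=dict)   # metric name -> value
    limitations: list = field(default_factory=list)  # known failure modes

    def missing_sections(self):
        """List sections that are still empty, so gaps are visible before release."""
        sections = {
            "intended_use": self.intended_use,
            "training_data": self.training_data,
            "evaluation": self.evaluation,
            "limitations": self.limitations,
        }
        return [name for name, value in sections.items() if not value]

    def to_text(self):
        """Render the card as plain text for a README or review document."""
        lines = [
            f"Model card: {self.name}",
            f"Intended use: {self.intended_use or 'NOT DOCUMENTED'}",
            f"Training data: {self.training_data or 'NOT DOCUMENTED'}",
            "Evaluation:",
        ]
        lines += [f"  {m}: {v}" for m, v in self.evaluation.items()] or ["  NOT DOCUMENTED"]
        lines.append("Limitations:")
        lines += [f"  - {item}" for item in self.limitations] or ["  NOT DOCUMENTED"]
        return "\n".join(lines)


# Hypothetical model and figures, for illustration only.
card = ModelCard(
    name="loan-screening-v1",
    intended_use="Rank applications for manual review, not automatic rejection.",
    training_data="Historical applications from a single region.",
    evaluation={"accuracy": 0.91},
    limitations=["Not validated for applicants outside the training region."],
)
print(card.to_text())
print("Missing sections:", card.missing_sections())
```

A check like `missing_sections()` could run in a release pipeline so that an undocumented model never ships quietly.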

Ethical Peer Review

Include ethicists or diverse reviewers in code audits. Challenge assumptions and surface unintended consequences early.

Being ethical as a developer doesn’t slow you down — it makes your product more robust, trusted, and future-proof.

Business leaders and managers may not code, but they shape how AI is used, funded, and deployed. Their role in AI ethics is critical.

Here’s how leaders can align with global frameworks:

Create an AI Ethics Charter

Define your organisation's principles based on existing frameworks (like UNESCO or ISO 42001). Publish this internally and externally.

Set Up Ethical Review Boards

Establish an internal team to review AI initiatives before launch — think of it like a legal or compliance review, but for ethics.

Invest in AI Training

Make sure employees understand AI risks and ethical considerations — especially product teams, data scientists, and marketers.

Vendor Ethics Checks

If you’re buying AI tools, audit vendors for their ethical practices. Ask: do they follow responsible AI guidelines? Are they transparent about risks?

Policy Alignment

Keep up with regulations (like the EU AI Act or Pakistan’s future AI policies). Ensure your AI practices are legally and ethically sound across markets.

Leaders who embrace ethical AI are not only protecting reputation — they’re building long-term trust and resilience.

Pakistan is on the brink of major AI growth, with initiatives like the National AI Policy 2025 aiming to scale innovation responsibly.

How AI ethics applies in Pakistan:

Policy Foundations Are Emerging

The government is working on data governance, legal safeguards, and public awareness — ethics will be a key part of AI infrastructure.

Local Innovation Needs Global Guardrails

Startups in Pakistan are exploring healthcare, agriculture, and fintech AI — areas where ethical risks are real (e.g., biased diagnostics or financial exclusion).

Bottom line: Pakistan’s AI journey is just beginning — and ethical design today will shape public trust tomorrow.

As artificial intelligence becomes more powerful, its influence over our lives grows deeper, and so do the ethical stakes. The rise of AI ethics frameworks isn’t just a trend; it’s a global necessity. From massive data models to everyday automation tools, the demand for responsible, explainable, and human-centred AI is louder than ever.

We’ve seen that ethics in AI isn’t a vague moral idea; it can be turned into structured, actionable frameworks. Whether it’s UNESCO’s global agreement, OECD’s guiding principles, or industry standards like ISO/IEC 42001, the world is building a shared language of AI responsibility. Even Big Tech is now forced to define and defend its ethical positions publicly.

For developers, this means building models that respect fairness, transparency, and privacy — not as afterthoughts, but as core design features. For leaders, it’s about putting strong governance in place and ensuring every AI-driven decision aligns with both company values and international standards.

And for curious minds — those exploring how AI will shape their future — the message is simple: knowledge is power. Understanding these frameworks helps you question, engage, and influence how technology evolves in your community and beyond.

In countries like Pakistan, where AI adoption is just accelerating, there's a powerful opportunity: to bake in ethics from the start. Rather than chasing global trends, Pakistan can help set them by combining innovation with inclusive, principled design.

So what’s next?

If you’re building AI, ask how your system impacts real people.

If you’re managing teams, embed ethics into every workflow.

And if you’re simply curious — keep asking questions. Because ethical AI isn’t just about machines. It’s about us.

I am Zeenat, an SEO Specialist and Content Writer specializing in on-page and off-page SEO to improve website visibility, user experience, and performance.

I optimize website content, meta elements, and site structure, and implement effective off-page SEO strategies, including link building and authority development. Through keyword research and performance analysis, I drive targeted organic traffic and improve search rankings.

I create high-quality, search-optimized content using data-driven, white-hat SEO practices, focused on delivering sustainable, long-term growth and improved online visibility.
